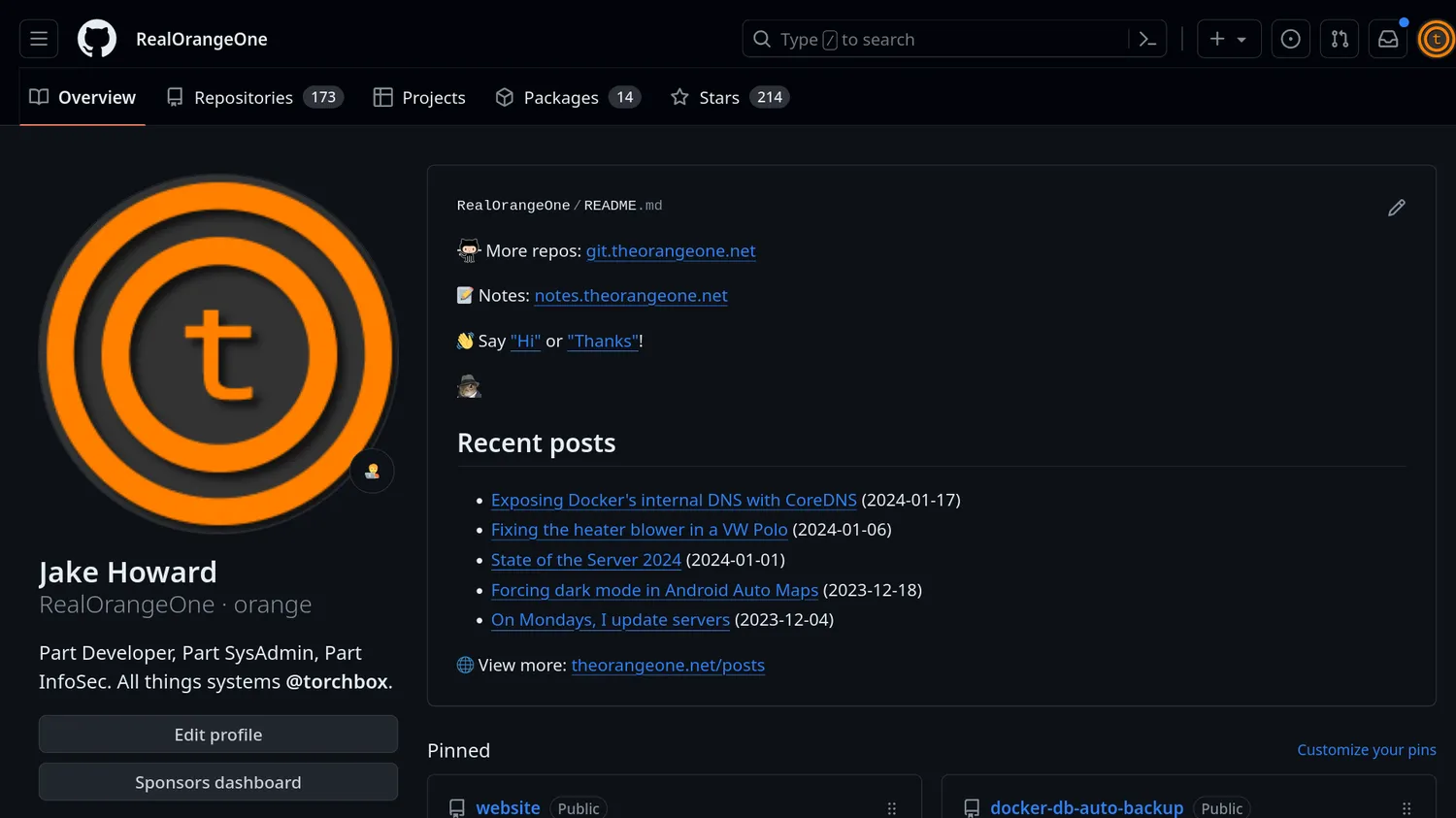

In case you didn't know, I have a blog - you're reading it now. It's not like what most people think of when they think "blog". It's guides, tales and random thoughts about the things I do, play around with or find interesting. The same can be said for the repositories on my GitHub account - they're either tools I've built to solve a problem, things I'm playing around with, or, I guess, stuff to do with work.

Given how closely linked the two are, and how a fair few people see my GitHub profile, it'd be nice to draw interested people towards my website. I'm sure there's something here of interest to them too. I've had links between the two since day one, in GitHub's profile README, but that's boring!

So, one evening, I set myself a challenge. What if I could add a list of recent blog posts to my GitHub profile, alongside the few links that are there at the moment, and show visitors what I'm writing about next to my pinned repositories? So that's exactly what I did.

# Scraping the data

Step one: scraping the data. As humans, we're totally fine just reading the posts list page and seeing what's there. Computers, on the other hand, not so much.

If this wasn't my website, I'd have to employ some brittle web scraping to get what I want. Scraping would work fine, but it's far more complex, and any changes in the page's markup could require completely rewriting the scraper. But, it is my website, so I have a lot more control.

If this was a static site, like it used to be, I might have to resort to parsing a folder structure of markdown files in a Git repository, or doing some complex templating to output a JSON file (I've done that before). Again, it'd work, but this isn't a static site, it's an almost completely custom CMS!

Instead, I leaned on the fact that the posts were already sitting in a database, and just wrote a simple REST API to expose them. As my website is based on Django (well, Wagtail), I used the aptly-named Django REST Framework. A few lines of code later, I had a performant, read-only, paginated API exposing some core details of my website's posts. The API itself is completely unauthenticated, and even has auto-generated documentation!
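To give a feel for what consuming a paginated API like this involves, here's a minimal sketch of a client that follows the pages until it has enough posts. It assumes the response uses the envelope that Django REST Framework's built-in pagination classes produce (a "results" list plus a "next" URL that is null on the last page). The function name, the limit and the "title" field are made up for illustration and aren't taken from my actual API.

```python
def collect_posts(fetch_page, first_url, limit=5):
    """Follow "next" links until `limit` posts have been collected.

    `fetch_page` is any callable that takes a URL and returns the decoded
    JSON body as a dict, e.g. `lambda url: requests.get(url).json()`.
    Assumes each page looks like {"results": [...], "next": <url or None>}.
    """
    posts = []
    url = first_url
    while url and len(posts) < limit:
        page = fetch_page(url)
        posts.extend(page["results"])
        # "next" is None (JSON null) on the final page, which ends the loop.
        url = page.get("next")
    return posts[:limit]


if __name__ == "__main__":
    # In-memory stand-in for the API, so the sketch runs without a network.
    fake_pages = {
        "page-1": {"results": [{"title": "First"}, {"title": "Second"}], "next": "page-2"},
        "page-2": {"results": [{"title": "Third"}], "next": None},
    }
    for post in collect_posts(fake_pages.__getitem__, "page-1", limit=3):
        print(post["title"])
```

Passing the fetcher in as an argument keeps the paging logic separate from the HTTP library, which also makes it easy to try out without hitting the real site.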

# Constructing the README

Now that my website has an API for the latest posts, removing almost all need for scraping, I need some way of getting them onto GitHub.

GitHub's profile READMEs are just that - READMEs. By creating a special repository with the same name as your username (RealOrangeOne in my case), GitHub will automatically show that repository's README on your profile, updating it whenever you change it. So, to edit the content there, I just needed to generate a README file.

In the interest of simplicity and convenience, a small Python script does all the work. It uses requests to fetch the posts from my API and jinja2 to template them into a file, and it's only a handful of lines of code. Run the script, and it writes out a README.md file based on the contents of the README.md.j2 template.
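The templating half of a script like this can be sketched roughly as follows. To keep the example free of dependencies, it swaps in the standard library's string.Template where the real script uses jinja2, and it takes the posts as a plain list rather than fetching them. The template text and the "title" and "url" field names are assumptions for illustration, not the contents of my actual README.md.j2.

```python
from pathlib import Path
from string import Template

# Hypothetical template; the real script reads README.md.j2 and renders it
# with jinja2. string.Template is used here only to avoid extra installs.
README_TEMPLATE = Template("""\
# Hello

## Recent posts

$posts
""")


def render_readme(posts):
    """Render the README text from a list of post dicts.

    Each post is assumed to have "title" and "url" keys.
    """
    lines = [f"- [{post['title']}]({post['url']})" for post in posts]
    return README_TEMPLATE.substitute(posts="\n".join(lines))


def write_readme(posts, path="README.md"):
    """Write the rendered README to `path`, replacing any existing file."""
    Path(path).write_text(render_readme(posts), encoding="utf-8")


if __name__ == "__main__":
    example_posts = [
        {"title": "An example post", "url": "https://example.com/posts/example/"},
    ]
    print(render_readme(example_posts))
```

Because the output depends only on the posts and the template, running it twice with the same posts produces an identical file, so there's nothing new to commit.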

# Automation

With a README on my computer, it just needs to get onto GitHub. Well, that's what git is for! A simple git push later, and the README.md is on GitHub and its contents on my profile - just what I want.

However, there's a problem: occasionally I write posts, so the list needs to be able to change. Sure, I could remember to re-run the script and commit the changes, but ain't nobody got time for that. Automation to the rescue of us lazy folks!

If you want to automate something on GitHub, you use GitHub Actions. Most people consider it their CI/CD offering, but it's far more powerful than that. Sure, you can trigger actions from pushes to certain branches, or users opening PRs, but you can run actions on far more than that, such as issue creation, releases, or an arbitrary schedule - which is what we need.

So, alongside the tiny Python script is a slightly larger Actions workflow, which runs both on a schedule and on push (just in case I tweak something manually). It installs the dependencies (listed in a requirements.txt file), runs my build.py script, and commits the changes (thanks to stefanzweifel/git-auto-commit-action). The workflow triggers every day at 6pm (UTC, as all things should be), and if there are no changes, nothing happens.

# Result

After all that work (well, not that much work), my recent posts appear on my GitHub profile, right at the top of the page. Sure, it's not quite as fancy as the graphs and animations other people have, but it's functional and to the point, without being messy.

There's no reason I couldn't add more in future - this pattern clearly extends to any number of other uses. The only limit is my imagination.

And, as a bonus, since I also mirror the repository to Gitea, it works there too!